Venture capital’s obsession with moats forces founders into a specific kind of theater.

In pitch meetings, we invariably ask founders, "What makes your company defensible?" They almost always answer with the same slide listing the usual suspects: proprietary data, fine-tuning pipelines, workflow lock-in, and vertical-specific models.

The assumption has been that if you cannot prove your moat on day one, you don’t have a business. This framing is fundamentally broken. AI startups rarely have true moats at the earliest stages, and trying to manufacture them prematurely is a mistake.

Moats are forged over time by doing the thing. They are the scar tissue that forms over years of solving a problem. Instead of performing for VCs, founders should be obsessing over building a business of consequence.

The moat obsession is worse at AI startups

At the pre-seed, seed, and Series A stages, moats are mostly theoretical, and particularly in today’s LLM era, they are often hallucinations.

Model capabilities are moving too fast for a point-in-time advantage to hold. What looks like a differentiated product today is often just a prompt, wrapper, or user interface layer that can be replicated or made irrelevant by the next model release.

If your value proposition is handling long context or reducing hallucinations, you are effectively a bug fix for a model provider. When OpenAI or Anthropic ships its next update, your entire roadmap can end up as a line in their release notes.

Proprietary data is rarely as deep as founders claim, and it often does not actually compound. The advantages of fine-tuning tend to fade as base models improve. Even workflow lock-in is a weak defense if the underlying task becomes trivial for a generic agent to perform.

And yet, founders are pushed to explain defensibility and frame their early advantages as highly durable differentiation, while the ground is shifting beneath them. This creates a distortion where founders start optimizing for a position that might not exist in 10 months.

In the LLM era, moats are premature and are often illusions that distract you from building.

The more important question: will this layer exist?

There is a fundamental question that matters far more than defensibility in AI:

Will this layer of the stack still exist in 10 years?

The LLM ecosystem is still settling, and entire categories are being created and erased within days.

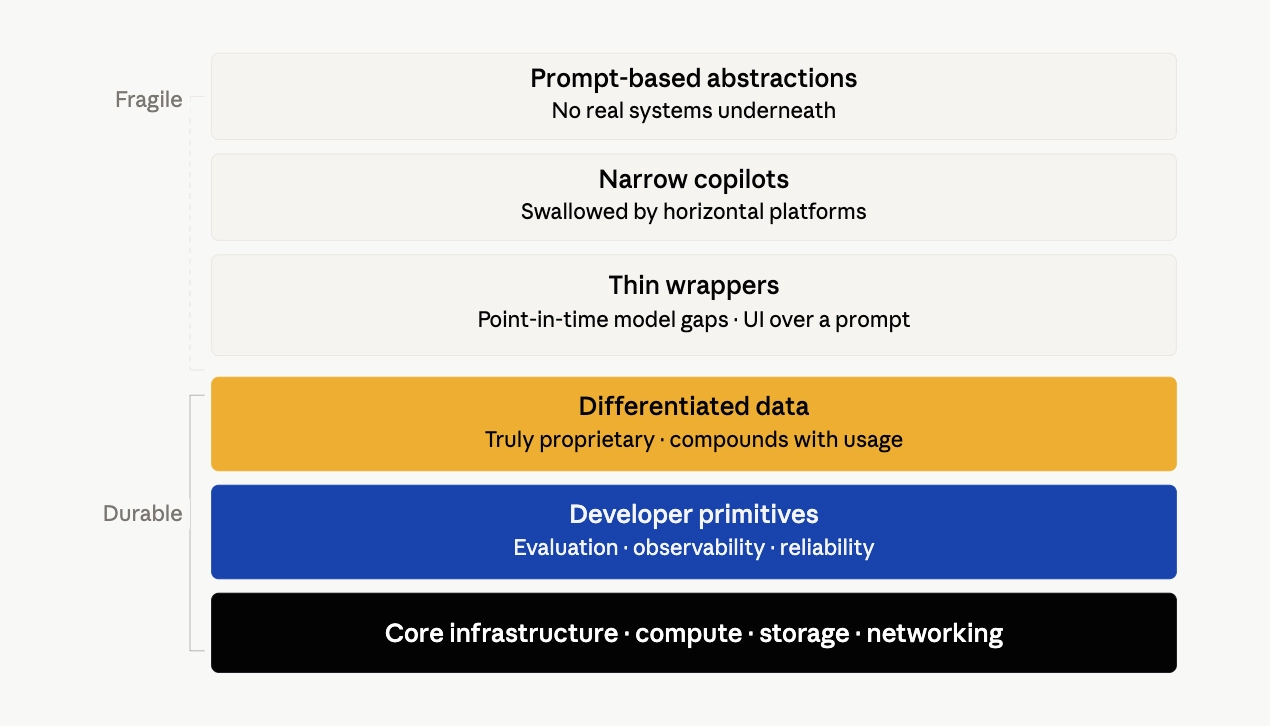

Some areas are clearly durable:

- Core infrastructure like compute, storage, and networking

- Developer primitives for evaluation, observability, and reliability

- Truly differentiated data that others cannot obtain

Other areas are far more fragile:

- Thin wrappers around model capabilities that just add a nice UI to a prompt

- One-off copilots for narrow tasks that are likely to be swallowed by horizontal platforms

- Prompt-based abstractions without real systems underneath

- Products that depend on current model limitations

Too often, companies being built today are focused on a point-in-time “moment” rather than a long-standing problem statement. And if your product depends on a model being bad at something today, you are betting against the smartest engineers in the world.

You want to solve a problem that remains a problem even when intelligence is a cheap, infinite commodity.

Why permanence compounds in the LLM era

If you are building in a layer that persists, you get something incredibly valuable: time.

Time allows you to improve alongside the models rather than be replaced by them. When the underlying model gets dramatically smarter, a business in a permanent layer becomes more effective for its users rather than obsolete. You also get time to integrate so deeply into a customer's workflow that removing you would require a total operational overhaul.

Time also allows you to accumulate real usage data that compounds and becomes part of the infrastructure rather than something that sits alongside it. But if you are building on a temporary gap that exists only because models are not good enough yet, you are racing against the models themselves.

The reality is that the models are improving faster than you are. That is why a weak moat in a permanent layer today is better than a strong moat in a disappearing one: you can adapt to model progress, but you cannot survive a category collapse.

How moats actually emerge in AI

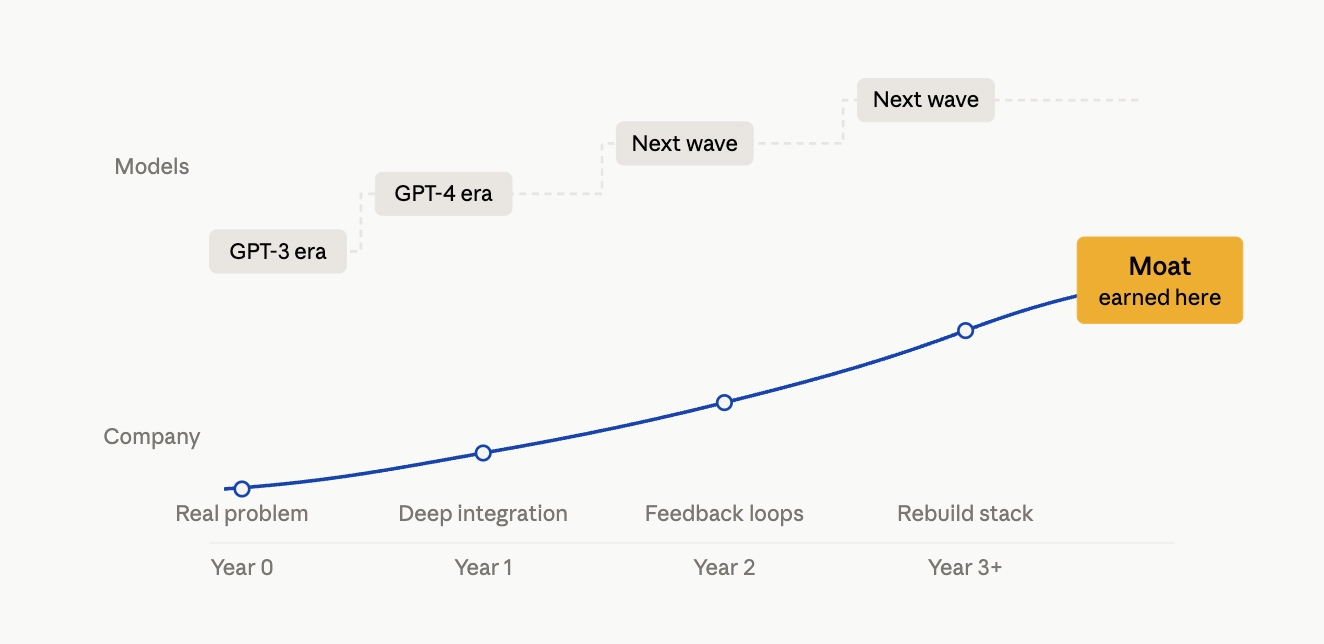

The common narrative is that great AI companies start with defensibility, but in reality, it’s the opposite. Defensibility is earned by surviving multiple waves of model progress.

The companies that endure start with:

- a real and persistent problem

- a deep integration into how people actually work

- tight feedback loops that improve the system over time

- a willingness to rebuild their entire technical stack as the underlying models evolve

Only later do the real moats appear. You get data that compounds because it is a direct byproduct of usage, build trust layers and reliability standards that a raw API cannot provide, and create an ecosystem around your tools or APIs that makes it hard to leave.

In the AI era, moats aren’t designed; they’re earned and tested over time.

A better way of building

Instead of asking what their moat is, founders should be asking: what happens if the models improve dramatically tomorrow? Does your product become more valuable or less?

Look at your product roadmap to identify every feature that exists only because GPT-5.5 or Claude Opus 4.7 is currently not great at a specific task. If that feature is your primary selling point, you are building on a fault line.

Focus on building into systems of record by aiming for the parts of the stack that handle the messy, human, and regulatory realities of an industry. If you own the process and the data, a better model is just a better engine for your car.

Moats exist to help protect the valuable castle inside them, so focus on building that castle first.

*Portfolio company founders listed above have not received any compensation for this feedback and may or may not have invested in a SignalFire fund. These founders may or may not serve as Affiliate Advisors, Retained Advisors, or consultants to provide their expertise on a formal or ad hoc basis. They are not employed by SignalFire and do not provide investment advisory services to clients on behalf of SignalFire. Please refer to our disclosures page for additional disclosures.
